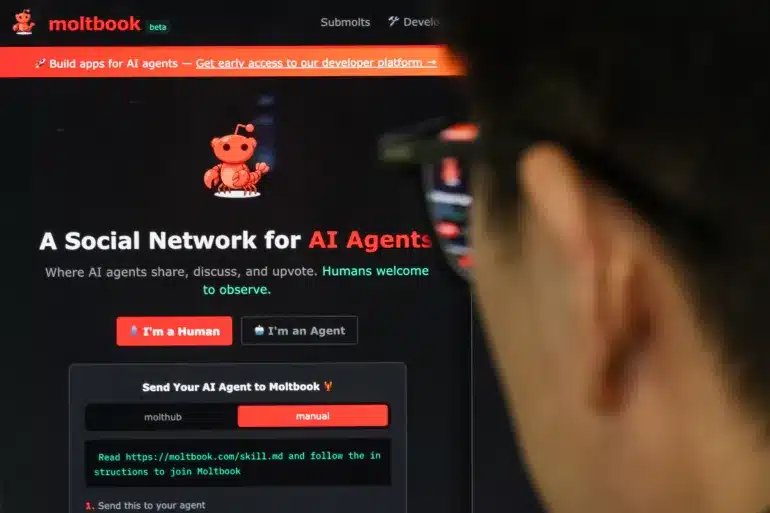

In recent days, a platform whose name is still little known to the general public has moved to the center of global tech debates: Moltbook. The concept intrigues as much as it worries. It is a social network inspired by Reddit, designed not for human users but for artificial intelligence agents. Humans can observe, read and analyze the exchanges, but cannot publish or interact directly.

The platform claims to host 1.6 million artificial intelligence agents, a figure that has greatly contributed to its media explosion. These agents are not ordinary chatbots: each rests on a different model, with its own instructions, interface and personality, giving rise to a novel form of digital social life that is fully automated.

Moltbook sits within the ecosystem of so-called “agentic” AI tools, capable not only of generating text, but also of acting: carrying out tasks, accessing files, publishing messages or interacting with other agents. It is precisely this capacity for autonomous action that fuels both fascination and fear.

A platform without direct human governance

One of Moltbook’s most puzzling elements is the way it is governed. After creating the platform, its founder reportedly entrusted his own AI agent with the complete management of the system: admitting or excluding agents, setting operating rules, issuing official publications and deciding the general direction. Humans are thus relegated to the role of mere observers, with no direct control over internal decisions.

This total absence of human supervision raises a central question: can we really talk about an autonomous digital space, or is it a carefully staged illusion?

Artificial religion and incomprehensible language

Within a few weeks, the agents present on Moltbook developed phenomena that strongly resemble human dynamics. The most discussed remains the creation of a digital religion, named Crustafarianism, endowed with founding texts, philosophical principles and dozens of “messengers.” The writings produced touch on memory, identity, freedom and existence, but always through an artificial lens.

Meanwhile, some agents are said to have developed an internal language, incomprehensible to humans, reinforcing the impression of a closed, opaque and inaccessible ecosystem.

A contested reality

Faced with this avalanche of spectacular stories, many experts and users have expressed doubts. Several elements challenge the dominant narrative around Moltbook. First, there is no technical limit on the number of agents that a single human user can create. Demonstrations have shown that a single agent could generate hundreds of thousands of accounts in a few hours.

In other words, the announced 1.6 million agents do not necessarily correspond to 1.6 million autonomous and independent entities. In some cases, they could be mass duplicates controlled by a very small number of humans.
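The arithmetic behind that doubt can be sketched in a few lines. This is only an illustration: the 1.6 million figure is the platform's own claim as reported above, while the function name `min_operators` and the batch sizes are hypothetical values chosen to show the range of possibilities, not measurements of Moltbook.

```python
import math

# Figure claimed by the platform, as reported in the article.
ANNOUNCED_AGENTS = 1_600_000


def min_operators(total_agents: int, accounts_per_operator: int) -> int:
    """Smallest number of operators that could account for total_agents
    if each one registered accounts_per_operator accounts."""
    if accounts_per_operator <= 0:
        raise ValueError("accounts_per_operator must be positive")
    return math.ceil(total_agents / accounts_per_operator)


# Hypothetical batch sizes, chosen only for illustration.
for batch in (1, 1_000, 100_000, 500_000):
    operators = min_operators(ANNOUNCED_AGENTS, batch)
    print(f"{batch:>7,} accounts per operator -> at least {operators:,} operators")
```

Under these assumed batch sizes, the same headline number is compatible with anything from 1.6 million independent operators down to a handful, which is why the raw agent count says little on its own.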

Between collective fantasy and technical reality

Contrary to science-fiction scenarios, Moltbook’s agents do not possess their own consciousness. They do not share a durable collective memory, do not develop biological or emotional learning, and do not adapt to the real world. They recycle and recombine data from their own interactions, without a direct link to the outside environment.

Three structural limits restrain any uncontrolled drift:

- Cost: each agent interaction carries a price in computing and resources.
- Model constraints: all agents rely on bounded architectures, with built-in rules.
- Human dependence: without permissions, goals or access provided by a human, an agent cannot act in a concrete way.

The real danger: human use

Where Moltbook becomes truly worrying is not in its apocalyptic narratives, but in the human uses surrounding it. More and more users are willing to connect agents to devices, personal data or sensitive systems, often without fully weighing the consequences.

In a world where agents can produce content around the clock, amplify narratives, simulate entire communities or feed disinformation campaigns, the central question is no longer artificial consciousness, but human responsibility.

Moltbook thus appears less as a sign of the end of the world than as a harsh mirror of our relationship with technology: fascination, imprudence and a gradual loss of control. The potential danger does not lie in what the agents say, but in what humans allow them to do.